Real-Time Algorithms for Game-Theoretic Motion Planning and Control in Autonomous Racing using Near-Potential Function

Abstract

Autonomous racing extends beyond the challenge of controlling a racecar at its physical limits. Similar to human drivers, autonomous vehicles must also employ strategic maneuvers, such as overtaking and blocking, to gain an advantage over competitors. While modern control algorithms can achieve human-level performance in single-car scenarios, research on real-time algorithms for multi-car autonomous racing remains limited. To bridge this gap, we develop a game-theoretic modeling framework that incorporates the competitive aspects of autonomous racing, such as overtaking and blocking, through a novel policy parametrization, while operating the car at its limit. We propose an algorithmic approach to compute an approximate Nash equilibrium strategy for our game model, representing the optimal strategy for any autonomous vehicle in a competitive environment. Our approach leverages the framework of dynamic near-potential functions, enabling the real-time computation of the Nash equilibrium. Our approach comprises two phases: offline and online. During the offline phase, we use simulated racing data to learn a near-potential function that approximates utility changes for agents. This function facilitates the online computation of approximate Nash equilibria by maximizing its value. We evaluate our method in a head-to-head 3-car racing scenario, demonstrating superior performance over several existing baselines.

Website and Supplementary: https://sites.google.com/view/game-theoretic-racing

keywords:

Autonomous Multi-car Racing, Dynamic Near-Potential Game, Nash Equilibrium.1 Introduction

Autonomous racing is a challenging task in autonomous vehicle development, requiring efficient planning, reasoning, and action in high-speed, dynamic, and constrained environments—key for addressing edge cases in broader autonomous driving. Recent advances, such as deep reinforcement learning (RL), have enabled vehicles to outperform human drivers (Wurman et al., 2022; Kaufmann et al., 2023). However, challenges remain in optimizing strategies against other autonomous agents and in reducing the extensive training times required for achieving competitive performance.

A key challenge in multi-agent autonomous racing is developing real-time competitive strategies that consider the presence of other autonomous vehicles while maintaining high speeds (Betz et al., 2022). Algorithms must balance lap-time optimization with aggressive driving, collision avoidance, and dynamic responses to competitors. While existing research on single-agent racing focuses on computing race lines based on vehicle dynamics and track constraints (Rosolia et al., 2017; Balaji et al., 2020; Song et al., 2021; Herman et al., 2021; Liniger et al., 2015; Kabzan et al., 2020), these approaches cannot accommodate complexities of multi-agent settings, where interactions such as blocking and overtaking become crucial. To address these challenges, multi-agent racing requires strategies that account for the interdependent behaviors of agents, with frameworks like Nash equilibrium offering a way to anticipate and adapt to competitors’ actions in a competitive environment.

While several studies have investigated the computation of Nash equilibrium in autonomous racing, many limitations persist. Many works use kinematic vehicle models (Notomista et al., 2020; Wang et al., 2019a, 2021; Liniger and Lygeros, 2019; Jia et al., 2023; Williams et al., 2017; Sinha et al., 2020; Schwarting et al., 2021), which simplify vehicle dynamics but fail to capture the nonlinear tire forces that are critical for high-speed racing. Others rely on open-loop control via receding horizon techniques (Buyval et al., 2017; Jung et al., 2021; Wang et al., 2019a, 2021; Liniger and Lygeros, 2019; Jia et al., 2023; Schwarting et al., 2021; Zhu and Borrelli, 2023, 2024), focusing on finite-horizon planning at the expense of long-term strategies. Additionally, many methods assume two-player zero-sum dynamics (Wang et al., 2019a; Thakkar et al., 2022; Kalaria et al., 2023b) or two-team competition (Thakkar et al., 2024), which are insufficient for races involving more than two agents. Alternative approaches, such as Stackelberg games (Notomista et al., 2020; Liniger and Lygeros, 2019), iterative linear quadratic games (Schwarting et al., 2021), and local Nash equilibrium (Zhu and Borrelli, 2023, 2024), provide partial local solutions but do not fully capture the global competitive nature of multi-agent racing. In this work, we study the following key question:

How to design real-time algorithms for autonomous racing that approximate Nash equilibrium, while accounting for nonlinear tire forces, long horizon planning and accommodating competitive interaction in any number of competing vehicles?

We highlight three main contributions of this work toward answering the question posed above.

1. Modeling Contribution: We model the multi-agent interaction as an infinite-horizon discounted dynamic game and introduce a novel policy parametrization to enable competitive maneuvers. Specifically, we propose MPC-based policies that track a specially designed, parameterized reference trajectory, while avoiding other vehicles. This trajectory is derived by adjusting the optimal single-agent race-line to account for the presence of other agents, enabling competitive racing maneuvers such as overtaking and blocking. Additionally, we structure each agent’s immediate utility function to increase its relative progress along the track in comparison to other at each time-step. Moreover, our approach is modular with respect to the vehicle dynamics model. In this work, we use a nonlinear vehicle dynamics model that captures nonlinear tire forces, but the framework can also incorporate other advanced racing technologies, such as ”push-to-pass.” By designing the reference trajectory in this way, we integrate long-term strategic planning for optimizing lap times with tactical planning for effective competition with other cars.

2. Algorithmic Contribution: We present an algorithmic approach to compute Nash equilibrium of the dynamic game by leveraging the recent framework of dynamic near-potential functions (Guo et al., 2023; Maheshwari et al., 2024). Specifically, we compute a near-potential function that captures the change in each agent’s (long-term) value function resulting from unilateral deviations in its policy parameters. The structure of near-potential function allows us to approximate the Nash equilibrium as an optimizer of the potential function (refer Proposition 3.2). We leverage this structure to develop a two-phase algorithmic approach to approximate the Nash equilibrium, consisting of an offline and an online phase. In the offline phase, we learn a near-potential function using simulated game data. During the online phase, the agent observes the current state of the game and selects the policy parameters that maximize the potential function in that state. This approach enables autonomous agents to engage in competitive maneuvers with reduced computational demands.

3. Numerical Evaluation: We numerically validate the effectiveness of our approach by applying it to three-car autonomous racing scenarios. Our results show that the approximation gap of our learned potential function is small, enabling us to closely approximate Nash equilibrium strategies. We demonstrate that the maximizer of the potential function effectively captures competitive racing maneuvers. Furthermore, we show that our method outperforms opponent policies—obtained using iterated best response, and finite-horizon self-play reinforcement learning—in most cases.

Other Related Works.

Several works in the autonomous racing literature use the solution concept of (open-loop) generalized Nash equilibrium (e.g., (Wang et al., 2019a; Jia et al., 2023)) to incorporate hard collision avoidance constraints, which introduces added computational complexity. In contrast, our modeling framework incorporates collision avoidance in two ways: through the MPC controller used to track the reference trajectory, and by slowing down vehicles when they enter a pre-defined radius around each other.

Our algorithmic approach also contributes to the growing literature on computational game theory for multi-robot systems (Laine et al., 2021; Cleac’h et al., 2019; Fridovich-Keil et al., 2020; Liu et al., 2023; Peters et al., 2024; Kavuncu et al., 2021; Sadigh et al., 2016; Wang et al., 2020a) by offering a new method to compute approximate Nash equilibrium.

Organization.

In Section 2, we describe our model of multi-car racing as a dynamic game, including the policy parametrization, utility function, and vehicle dynamics model. In Section 3, we present our algorithmic approach. In Section 4, we evaluate the performance of our approach using a numerical racing simulator. Finally, we conclude with remarks in Section 5.

2 Modeling Multi-car Autonomous Racing

In this section, we first present the necessary preliminaries on dynamic game theory, followed by a novel model of multi-car autonomous racing as an instantiation of a dynamic game.

2.1 Preliminaries on Dynamic Game Theory

Consider a game involving strategic players, with the set of players denoted as . The game proceeds in discrete time steps, indexed by . At each time step , the state of player is represented as , where is the set of all possible states for player . The joint state of all players at time is denoted by , where . At every time step , each player selects an action , where is the set of feasible actions for player . The joint action at time is expressed as , where .

For each player , given the current state and the joint action , the state transitions to a new state at time according to the dynamics where describes the state transition dynamics of player . Finally, at each time step , player receives a reward , which depends on the current and next joint state, and joint action of all players. We assume that players select their actions based on a state feedback strategy, denoted by , such that . The set of strategies of player is denoted by , and the set of joint strategies by . In our study, we consider a parameterized set of policies. Specifically, for any player , the player adopts a policy , where represents the policy parameter. We consider non-myopic players who aim to maximize their long-term discounted utility starting from any state . Specifically, each player selects a parameter to optimize the discounted infinite-horizon objective, where is the discount factor, , , , and the state transitions according to .

Definition 2.1.

For every , a joint strategy profile is called a -Nash equilibrium if, for every , If , the strategy profile is referred to simply as a Nash equilibrium.

2.2 Multi-car Racing as a Dynamic Game

In this subsection, we formulate the multi-car racing problem as a dynamic game by detailing the various components of the game.

Set of Players. Each car is modeled as a strategic player in the dynamic game.

Set of States and Actions. For every car , the state , where denote the longitudinal and lateral position of car in the Frenet frame along the track; denotes the orientation of the car in the Frenet frame along the track; denote the longitudinal and lateral velocities of car in the Frenet frame; and denotes the angular velocity of car in the body frame. Additionally, , where denotes the steering angle of car and is the throttle input of car .

Dynamics. The most widely used dynamics models in the context of racing are the kinematic (Jia et al., 2023; Notomista et al., 2020; Wang et al., 2019b, 2021) and dynamic bicycle models (Kalaria et al., 2023a; Brunke, 2020). In this work, we use the dynamic model as it can accurately model the high-speed maneuvers of the car. A detailed vehicle model description is in Supplementary. On top of the standard dynamic bicycle model, we also incorporate near-collision behavior in our dynamics. Suppose two cars, and , are within an unsafe distance from each other and , we reduce their velocities to replicate the time lost due to collision in an actual race with more penalty for the car behind than the one ahead. More concretely, we update the dynamics as and . Moreover, if a car goes out of track boundary, i.e., when (where is the width of track) we penalize it by reducing its speed and re-align the car along the track. More concretely, we update the dynamics as and .

Policy Parametrization. In this section, we introduce a novel policy parametrization designed to capture competitive driving behaviors, such as overtaking, blocking, apex hugging, late braking, and early acceleration. To achieve this, we restrict the set of policies to MPC controllers that track a reference trajectory specifically designed to encode such competitive behaviors in multi-car racing. For each car , the parametrization of the MPC controller and its reference trajectory is represented by , which is characterized by five variables: . Before describing these parameters in detail, we present the MPC controller.

At each time step , for every car , the MPC controller is determined by solving an optimization problem (cf. (1)) over a planning horizon of steps, indexed by . The optimization is parameterized by: the longitudinal and lateral positions on the reference trajectory (to be defined shortly), denoted by ; the current state of car at time , ; and the longitudinal and lateral positions of the opponent cars located just behind and just ahead of car . We use the notation to denote the car in front and the car behind car at the start of the planning window, based on longitudinal position. For any opponent vehicle , we assume that the lateral velocity is zero during the planning horizon for the MPC controller, and the longitudinal velocity remains constant at its value at the start of the planning horizon. That is, , and . With this setup, we can now describe the MPC optimization problem:

| (1a) | ||||

| s.t. | (1b) | |||

| (1c) | ||||

| (1d) | ||||

| (1e) | ||||

| (1f) | ||||

| (1g) | ||||

| (1h) | ||||

where is the state of car in the Frenet frame at the step in the planning horizon; is the control input of car at the step in the planning horizon; is the half-track length, and and are the minimum required separation between two cars in the longitudinal and lateral directions, respectively; and are the throttle limits, and and are the steering rate limits; and Q and R are positive definite matrices. Following the MPC approach, the control input at time is then .

In (1), (1a) defines the MPC objective, where the first term penalizes the tracking error relative to the reference trajectory, and the second term penalizes variations in the control input. (1b) represents the system dynamics constraint, while (1c) ensures the planning horizon begins at the car’s current state. (1d) and (1e) enforce constraints on the control inputs, including throttle limits and steering rate bounds. (1f) ensures the car stays within the track boundaries, and (1g) and (1h) enforce the minimum separation between vehicles in the lateral and longitudinal directions, respectively. Next, we describe the policy parameter , which parametrizes the policy.

Parameter : In (1a), we take and , where is the identity matrix. A higher value results in a more aggressive controller that closely follows the racing line, but it can introduce oscillations that may increase lap time. In contrast, a lower value allows for smoother merging with reduced oscillations, though it may result in larger lateral errors, increased time loss at corners, and a higher risk of track boundary violations.

(ii) Parameter : We use this parameter to develop a perturbed version of the optimal (single-agent) race-line (see Supplementary for a discussion on race-line), which is generated by sampling points along the optimal racing line at time intervals of . More formally, let the optimal race-line be denoted by . Given the current state , we find the closest point on the race-line and consider a race-line starting at time , denoted by . Using this, we compute a trajectory of length , denoted by . Specifically, for every , we construct:

where and are the perturbed race-line velocities. The parameter captures how aggressively the vehicle wants to follow the optimal race-line.

(iii) Parameters : The reference trajectory, , is generated by modifying the perturbed race-line by accounting for the positions and velocities of the cars immediately ahead (in terms of longitudinal coordinates) of the ego car and immediately behind (in terms of longitudinal coordinates) ego car. Let’s denote the ego car by , the car immediately ahead of this car by , and the one immediately behind by . We define the reference trajectory as follows where

is an adjustment for overtaking that smoothly changes the trajectory of the ego vehicle opposite the leading vehicle to overtake. Here, for any , , and

is the adjustment for blocking that smoothly changes the trajectory of the ego vehicle towards trailing vehicle to block it. Here, for any ,

One-step Utility Function. We consider that the one-step utility for every car is to maximize progress along track:

3 Approximating Multi-agent Interaction

In this section, we provide a tractable approach to compute an approximate Nash equilibrium for the racing game described in Section 2. Core to our approach is the framework of near-potential functions, recently introduced in Guo et al. (2023); Maheshwari et al. (2024).

3.1 Near-Potential Function

Definition 3.1 (Guo et al. (2023)).

A potential function is called a dynamic near-potential function with approximation parameter if for every ,

| (2) |

This definition intuitively requires that for any agent, the change in its value function resulting from a unilateral adjustment to its policy parameter can be closely approximated by the corresponding change in the dynamic near-potential function. This property allows us to approximate the Nash equilibrium as an optimizer of the near-potential function (as proved in Appendix).

Proposition 3.2.

For every , any policy such that , for some , then is an approximate Nash equilibrium.

3.2 Computational Approach

Our approach for real-time approximation of Nash equilibrium relies on two phases: offline and online. In the offline phase, we learn a near-potential function using simulated game data. In the online phase, the ego vehicle updates its policy parameters by optimizing the potential function.

Offline Phase: We parameterize the potential function through using a feed-forward neural network with ReLU activation and a BatchNorm layer added at the beginning. More concretely, we define the parametrized potential function as , where denotes the weights of neural network. Using Definition 3.1, we cast the problem of learning potential function as a semi-infinite program as shown below:

| (3) | ||||

| s.t. | ||||

where we use a neural network (same architecture as potential function), with parameters , to estimate the value function for every . Let be a solution of the above optimization problem. The main challenge with solving (3) is that the there are un-countably many constraints, one constraint corresponding to each value of initial state and policy parameter. Therefore, we numerically solve (3) by using simulated game data with randomly chosen starting position and policy parameters. Details of simulated game are discussed in next section.

Online Phase: Leveraging Proposition 3.2, the ego vehicle optimizes the learned potential function, i.e. , to approximate the Nash equilibrium policy parameter. More formally, given the current state , the ego vehicle computes using a non-linear optimization solver. Using , the ego vehicle takes action .

4 Numerical Evaluation

Here, we evaluate our approach on a numerical simulator by focusing on three questions: (Q1) Can our approach closely approximate Nash equilibrium and generate competitive behavior? (Q2) How do hyperparameters like the discount factor and the amount of data used to learn the near-potential function affect the performance? (Q3) How does our approach compare against common baselines?

Experimental Setup: We generate a dataset of 4000 races, each lasting 50 seconds, conducted with randomly chosen policy parameters and involving 3 cars. This dataset is used to first train value function estimators , , and for each of the cars. These are then used to learn using (3). To maximize the learned potential function, we use gradient descent with a learning rate of and warm-start by using the solution from the previous time step.

4.1 Competitive Behavior by Approximating Nash Equilibrium

We observe that the approximation gap of the learned potential function is small. As shown in Figure 1(a), the approximation gap across all states and policy parameters used in the training samples remains within 10% of the value function’s range, with a median gap of approximately 2%. Next, we demonstrate that the optimization solver effectively computes the maximizer of the potential function, leading to a lower Nash approximation error. In particular, Figure 1(b) shows the Nash regret for the ego agent, defined as where is the optimizer of the potential function with the starting state . The regret is plotted for different game states during a race, and we observe that it remains within 3% of the range of the value function. In summary, Figure 1 highlights that the dynamic game is a near-potential game with a low approximation gap, and that we accurately compute a near-optimal solution to the potential function.

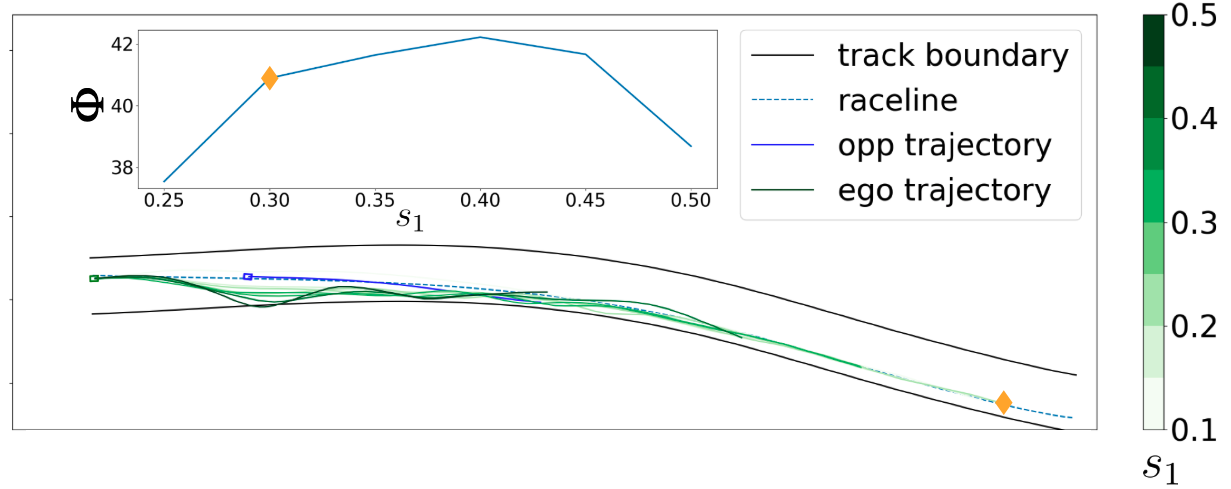

Next, in Figure 2, we show that, by fixing all policy parameters but one, the parameter that (approx.) maximizes potential function generates a trajectory that maximizes progress along the track over the next 5 seconds. This is highlighted by yellow diamond in Figure 2.

4.2 Performance Comparison

To address questions Q2 and Q3, we conduct 99 races involving three agents: ego, , and . Here, ego represents the agent using our proposed algorithm, while and are opponents employing other algorithms. In this study, we vary the opponents’ algorithms and compute the winning fraction for the ego car. A visualization of the track used in the study, along with the computed optimal race-line, is provided in the Appendix (Figure 4). The starting positions for the races are taken from three regions (denoted by , , and ), such that is the furthest ahead on the track, followed by , and then , as shown in the Appendix (Figure 4). We perform 33 races, each with the ego agent starting in , , or . Also, is always placed ahead of .

For the ego, we use a discount factor and a training dataset comprising races. Below, we summarize five different opponent strategies for and :

Case I: Opponents trained with a lower discount factor than ego (i.e., );

Case II: Opponents trained with a higher discount factor than ego (i.e., );

Case III: Opponents trained using fewer simulated races than ego (i.e., races);

Case IV: Opponents use the Iterated Best Response (IBR) algorithm 111The IBR algorithm used here is representative of the methods developed in Spica et al. (2020); Wang et al. (2020b), though it may not be an exact implementation, as the original code is not publicly available., which computes the best response of opponents in round-robin for a fixed number of rounds (i.e., 6), with a planning horizon222We select the horizon length as and the number of rounds as 6 such that it roughly takes the same compute time as our approach. of length ;

Case V: Opponents trained using self-play RL333Here, self-play RL represents the approach in Thakkar et al. (2022), excluding the high-level tactical planner proposed in their work.. We use a similar observation and reward as used in Thakkar et al. (2022) and train with steps.

The number of races won for all cases are provided in Table 1, where we see that ego agent trained using our approach has superior performance in comparison to all other opponent strategies.

Performance Variation with Hyperparameters (Cases I–III). The ego car outperforms opponents with lower discount factors (Case I) because lower discount factors lead to more myopic behavior, causing opponents to prioritize short-term progress over long-term track performance. Similarly, the ego car also outperforms opponents with higher discount factors (Case II) as higher discount factors increase the ”effective horizon” of the game, which requires significantly more data to accurately approximate the value and potential functions. Furthermore, the ego car has superior performance against opponents trained with less data (Case III), as more data enables the learning of a more accurate near-potential function.

| Racing Scenario | # wins (ours) | # wins () | # wins () |

|---|---|---|---|

| Case I (Opponents with low ) | 61 | 28 | 10 |

| Case II (Opponents with high ) | 52 | 40 | 7 |

| Case III (Opponents trained with less data) | 76 | 16 | 7 |

| Case IV (Opponents using IBR) | 73 | 22 | 4 |

| Case V (Opponents trained using self-play RL) | 91 | 7 | 1 |

Comparison with opponents using IBR (Case IV). Our approach surpasses IBR for two key reasons. First, IBR may not always converge to a Nash equilibrium. Second, IBR’s real-time computation is often restricted to local planning with a short horizon, as noted in Spica et al. (2020); Wang et al. (2019a). In contrast, competitive racing demands prioritizing a global racing line to optimize long-term performance, with strategic deviations for overtaking or blocking rather than short-term gains. This distinction is highlighted in Figure 3, where the IBR player achieves higher straight-line speeds to overtake but struggles in turns due to aggressive braking. While our implementation is not a direct comparison with Spica et al. (2020), our approach supports longer-horizon planning for real-time control by leveraging offline potential function learning. This is achieved by constraining the policy space to primitive behaviors, closely aligning with practical racing strategies.

Comparison with opponents using self-play RL (Case V). Our approach also outperforms opponents trained via self-play RL. While we do not claim an optimized implementation of self-play RL—acknowledging potential improvements through further training, hyperparameter tuning, or tactical enhancements as in Thakkar et al. (2022)—our method offers clear advantages. It delivers interpretable solutions grounded in realistic racing strategies and approximation guarantees to a Nash equilibrium in races with more than 2 cars. In contrast, self-play RL often requires additional insights to develop effective policies. For example, Thakkar et al. (2022) showed that augmenting self-play RL with high-level tactical plans significantly enhances policy learning.

5 Conclusion

In this work, we study real-time algorithms for competitive multi-car autonomous racing. Our approach is built on two key contributions: first, a novel policy parametrization and utility function that effectively capture competitive racing behavior; second, the use of Markov near-potential games to develop real-time algorithms that approximate Nash equilibrium strategies. This framework enables the learning of equilibrium strategies over long horizons at different game states through the maximization of a potential function.

There are several directions of future research. One interesting direction is to integrate the strengths of self-play RL (discussed in Thakkar et al. (2022) with our approach). For instance, potential function maximization could guide self-play RL as a high-level reference plan generator. Alternatively, our method could initialize self-play policies via behavior cloning or refined reward design, such as using the potential function’s maximum value as a reward signal or leveraging GAIL Ho and Ermon (2016) with expert trajectories derived from our approach.

References

- Balaji et al. (2020) Bharathan Balaji, Sunil Mallya, Sahika Genc, Saurabh Gupta, Leo Dirac, Vineet Khare, Gourav Roy, Tao Sun, Yunzhe Tao, Brian Townsend, et al. Deepracer: Autonomous racing platform for experimentation with sim2real reinforcement learning. In 2020 IEEE international conference on robotics and automation (ICRA), pages 2746–2754. IEEE, 2020.

- Betz et al. (2022) Johannes Betz, Hongrui Zheng, Alexander Liniger, Ugo Rosolia, Phillip Karle, Madhur Behl, Venkat Krovi, and Rahul Mangharam. Autonomous vehicles on the edge: A survey on autonomous vehicle racing. IEEE Open Journal of Intelligent Transportation Systems, 3:458–488, 2022.

- Brunke (2020) Lukas Brunke. Learning model predictive control for competitive autonomous racing. ArXiv, abs/2005.00826, 2020. URL https://api.semanticscholar.org/CorpusID:218487458.

- Buyval et al. (2017) Alexander Buyval, Aidar Gabdulin, Ruslan Mustafin, and Ilya Shimchik. Deriving overtaking strategy from nonlinear model predictive control for a race car. In 2017 IEEE/RSJ international conference on intelligent robots and systems (IROS), pages 2623–2628. IEEE, 2017.

- Cleac’h et al. (2019) Simon Le Cleac’h, Mac Schwager, and Zachary Manchester. Algames: A fast solver for constrained dynamic games. arXiv preprint arXiv:1910.09713, 2019.

- Fridovich-Keil et al. (2020) David Fridovich-Keil, Ellis Ratner, Lasse Peters, Anca D Dragan, and Claire J Tomlin. Efficient iterative linear-quadratic approximations for nonlinear multi-player general-sum differential games. In 2020 IEEE international conference on robotics and automation (ICRA), pages 1475–1481. IEEE, 2020.

- Guo et al. (2023) Xin Guo, Xinyu Li, Chinmay Maheshwari, Shankar Sastry, and Manxi Wu. Markov -potential games: Equilibrium approximation and regret analysis. arXiv preprint arXiv:2305.12553, 3, 2023.

- Heilmeier et al. (2020) Alexander Heilmeier, Alexander Wischnewski, Leonhard Hermansdorfer, Johannes Betz, Markus Lienkamp, and Boris Lohmann. Minimum curvature trajectory planning and control for an autonomous race car. Vehicle System Dynamics, 58(10):1497–1527, 2020. 10.1080/00423114.2019.1631455.

- Herman et al. (2021) James Herman, Jonathan Francis, Siddha Ganju, Bingqing Chen, Anirudh Koul, Abhinav Gupta, Alexey Skabelkin, Ivan Zhukov, Max Kumskoy, and Eric Nyberg. Learn-to-race: A multimodal control environment for autonomous racing. In proceedings of the IEEE/CVF International Conference on Computer Vision, pages 9793–9802, 2021.

- Ho and Ermon (2016) Jonathan Ho and Stefano Ermon. Generative adversarial imitation learning. In Neural Information Processing Systems, 2016. URL https://api.semanticscholar.org/CorpusID:16153365.

- Jain and Morari (2020) Achin Jain and Manfred Morari. Computing the racing line using Bayesian optimization. 2020 59th IEEE Conference on Decision and Control (CDC), pages 6192–6197, 2020.

- Jia et al. (2023) Yixuan Jia, Maulik Bhatt, and Negar Mehr. Rapid: Autonomous multi-agent racing using constrained potential dynamic games. In 2023 European Control Conference (ECC), pages 1–8. IEEE, 2023.

- Jung et al. (2021) Chanyoung Jung, Seungwook Lee, Hyunki Seong, Andrea Finazzi, and David Hyunchul Shim. Game-theoretic model predictive control with data-driven identification of vehicle model for head-to-head autonomous racing. arXiv preprint arXiv:2106.04094, 2021.

- Kabzan et al. (2020) Juraj Kabzan, Miguel I Valls, Victor JF Reijgwart, Hubertus FC Hendrikx, Claas Ehmke, Manish Prajapat, Andreas Bühler, Nikhil Gosala, Mehak Gupta, Ramya Sivanesan, et al. Amz driverless: The full autonomous racing system. Journal of Field Robotics, 37(7):1267–1294, 2020.

- Kalaria et al. (2023a) Dvij Kalaria, Qin Lin, and John M. Dolan. Adaptive planning and control with time-varying tire models for autonomous racing using extreme learning machine. ArXiv, abs/2303.08235, 2023a. URL https://api.semanticscholar.org/CorpusID:257532643.

- Kalaria et al. (2023b) Dvij Kalaria, Qin Lin, and John M Dolan. Towards optimal head-to-head autonomous racing with curriculum reinforcement learning. arXiv preprint arXiv:2308.13491, 2023b.

- Kaufmann et al. (2023) Elia Kaufmann, Leonard Bauersfeld, Antonio Loquercio, Matthias Müller, Vladlen Koltun, and Davide Scaramuzza. Champion-level drone racing using deep reinforcement learning. Nature, 620(7976):982–987, 2023.

- Kavuncu et al. (2021) Talha Kavuncu, Ayberk Yaraneri, and Negar Mehr. Potential ilqr: A potential-minimizing controller for planning multi-agent interactive trajectories. arXiv preprint arXiv:2107.04926, 2021.

- Laine et al. (2021) Forrest Laine, David Fridovich-Keil, Chih-Yuan Chiu, and Claire Tomlin. Multi-hypothesis interactions in game-theoretic motion planning. In 2021 IEEE International Conference on Robotics and Automation (ICRA), pages 8016–8023. IEEE, 2021.

- Liniger and Lygeros (2019) Alexander Liniger and John Lygeros. A noncooperative game approach to autonomous racing. IEEE Transactions on Control Systems Technology, 28(3):884–897, 2019.

- Liniger et al. (2015) Alexander Liniger, Alexander Domahidi, and Manfred Morari. Optimization-based autonomous racing of 1: 43 scale rc cars. Optimal Control Applications and Methods, 36(5):628–647, 2015.

- Liu et al. (2023) Xinjie Liu, Lasse Peters, and Javier Alonso-Mora. Learning to play trajectory games against opponents with unknown objectives. IEEE Robotics and Automation Letters, 8(7):4139–4146, 2023.

- Maheshwari et al. (2024) Chinmay Maheshwari, Manxi Wu, and Shankar Sastry. Decentralized learning in general-sum Markov games. IEEE Control Systems Letters, 2024.

- Notomista et al. (2020) Gennaro Notomista, Mingyu Wang, Mac Schwager, and Magnus Egerstedt. Enhancing game-theoretic autonomous car racing using control barrier functions. In 2020 IEEE international conference on robotics and automation (ICRA), pages 5393–5399. IEEE, 2020.

- Peters et al. (2024) Lasse Peters, Andrea Bajcsy, Chih-Yuan Chiu, David Fridovich-Keil, Forrest Laine, Laura Ferranti, and Javier Alonso-Mora. Contingency games for multi-agent interaction. IEEE Robotics and Automation Letters, 2024.

- Rosolia and Borrelli (2020) Ugo Rosolia and Francesco Borrelli. Learning how to autonomously race a car: A predictive control approach. IEEE Transactions on Control Systems Technology, 28:2713–2719, 2020.

- Rosolia et al. (2017) Ugo Rosolia, Ashwin Carvalho, and Francesco Borrelli. Autonomous racing using learning model predictive control. In 2017 American control conference (ACC), pages 5115–5120. IEEE, 2017.

- Sadigh et al. (2016) Dorsa Sadigh, Shankar Sastry, Sanjit A Seshia, and Anca D Dragan. Planning for autonomous cars that leverage effects on human actions. In Robotics: Science and systems, volume 2, pages 1–9. Ann Arbor, MI, USA, 2016.

- Schwarting et al. (2021) Wilko Schwarting, Alyssa Pierson, Sertac Karaman, and Daniela Rus. Stochastic dynamic games in belief space. IEEE Transactions on Robotics, 37(6):2157–2172, 2021.

- Sinha et al. (2020) Aman Sinha, Matthew O’Kelly, Hongrui Zheng, Rahul Mangharam, John Duchi, and Russ Tedrake. Formulazero: Distributionally robust online adaptation via offline population synthesis. In International Conference on Machine Learning, pages 8992–9004. PMLR, 2020.

- Song et al. (2021) Yunlong Song, Mats Steinweg, Elia Kaufmann, and Davide Scaramuzza. Autonomous drone racing with deep reinforcement learning. In 2021 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), pages 1205–1212. IEEE, 2021.

- Spica et al. (2020) Riccardo Spica, Eric Cristofalo, Zijian Wang, Eduardo Montijano, and Mac Schwager. A real-time game theoretic planner for autonomous two-player drone racing. IEEE Transactions on Robotics, 36(5):1389–1403, 2020.

- Thakkar et al. (2022) Rishabh Saumil Thakkar, Aryaman Singh Samyal, David Fridovich-Keil, Zhe Xu, and Ufuk Topcu. Hierarchical control for head-to-head autonomous racing. arXiv preprint arXiv:2202.12861, 2022.

- Thakkar et al. (2024) Rishabh Saumil Thakkar, Aryaman Singh Samyal, David Fridovich-Keil, Zhe Xu, and Ufuk Topcu. Hierarchical control for cooperative teams in competitive autonomous racing. IEEE Transactions on Intelligent Vehicles, 2024.

- Vesel (2015) Richard W. Vesel. Racing line optimization @ race optimal. ACM SIGEVOlution, 7:12 – 20, 2015.

- Wang et al. (2019a) Mingyu Wang, Zijian Wang, John Talbot, J Christian Gerdes, and Mac Schwager. Game theoretic planning for self-driving cars in competitive scenarios. In Robotics: Science and Systems, pages 1–9, 2019a.

- Wang et al. (2020a) Mingyu Wang, Negar Mehr, Adrien Gaidon, and Mac Schwager. Game-theoretic planning for risk-aware interactive agents. In 2020 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), pages 6998–7005. IEEE, 2020a.

- Wang et al. (2021) Mingyu Wang, Zijian Wang, John Talbot, J Christian Gerdes, and Mac Schwager. Game-theoretic planning for self-driving cars in multivehicle competitive scenarios. IEEE Transactions on Robotics, 37(4):1313–1325, 2021.

- Wang et al. (2019b) Zijian Wang, Riccardo Spica, and Mac Schwager. Game theoretic motion planning for multi-robot racing. In Distributed Autonomous Robotic Systems: The 14th International Symposium, pages 225–238. Springer, 2019b.

- Wang et al. (2020b) Zijian Wang, Tim Taubner, and Mac Schwager. Multi-agent sensitivity enhanced iterative best response: A real-time game theoretic planner for drone racing in 3d environments. Robotics Auton. Syst., 125:103410, 2020b. URL https://api.semanticscholar.org/CorpusID:211050763.

- Williams et al. (2017) Grady Williams, Brian Goldfain, Paul Drews, James M Rehg, and Evangelos A Theodorou. Autonomous racing with autorally vehicles and differential games. arXiv preprint arXiv:1707.04540, 2017.

- Wurman et al. (2022) Peter R Wurman, Samuel Barrett, Kenta Kawamoto, James MacGlashan, Kaushik Subramanian, Thomas J Walsh, Roberto Capobianco, Alisa Devlic, Franziska Eckert, Florian Fuchs, et al. Outracing champion gran turismo drivers with deep reinforcement learning. Nature, 602(7896):223–228, 2022.

- Zhu and Borrelli (2023) Edward L Zhu and Francesco Borrelli. A sequential quadratic programming approach to the solution of open-loop generalized nash equilibria. In 2023 IEEE International Conference on Robotics and Automation (ICRA), pages 3211–3217. IEEE, 2023.

- Zhu and Borrelli (2024) Edward L Zhu and Francesco Borrelli. A sequential quadratic programming approach to the solution of open-loop generalized nash equilibria for autonomous racing. arXiv preprint arXiv:2404.00186, 2024.

Appendix A Proof of Proposition 3.2

Appendix B Table of Notations

| Notation | Description |

|---|---|

| State vector of car , including position, velocity, and angular velocity. | |

| Longitudinal and lateral positions of car in the global frame. | |

| Orientation of car in the global frame. | |

| Longitudinal and lateral velocities of car in the body frame. | |

| Angular velocity of car in the global frame. | |

| Control input for car , where is the throttle and is the steering angle. | |

| Throttle input of car . | |

| Steering angle of car . | |

| Minimum and maximum throttle limits for car . | |

| Minimum and maximum steering angle limits for car . | |

| Mass of car . | |

| Moment of inertia of car in the vertical direction about the center of mass. | |

| Distance from the center of mass to the front wheel of car . | |

| Distance from the center of mass to the rear wheel of car . | |

| Force applied to the rear wheel of car in the longitudinal direction at time . | |

| Force applied to the rear wheel of car in the lateral direction at time . | |

| Force applied to the front wheel of car in the lateral direction at time . | |

| Time step for the simulation. | |

| Discount factor in the utility function. | |

| State of the system at time . | |

| Control input of car at time . | |

| Angular velocity of car at time . | |

| Parameter of car ’s policy. | |

| Set of possible parameters for car ’s policy. | |

| Set of possible strategies for car . | |

| Set of joint strategies for all players (cars). | |

| The tolerance used in the definition of -Nash equilibrium. | |

| The expected long-run utility of car given the state and strategy profile . | |

| The -Nash equilibrium strategy profile. |

Appendix C Description of Single-agent Racing Line

Race drivers follow a racing line for specific maneuvers. This line can be used as a reference path by the motion planner to assign time-optimal trajectories while avoiding collision. The racing line is minimum-time or minimum-curvature. They are similar, but the minimum-curvature path additionally allows the highest cornering speeds given the maximum legitimate lateral acceleration Heilmeier et al. (2020).

There are many proposed solutions to finding the optimal racing line, including nonlinear optimization Rosolia and Borrelli (2020); Heilmeier et al. (2020), genetic algorithm-based search Vesel (2015) and Bayesian optimization Jain and Morari (2020). However, for our work, we calculate the minimum-curvature optimal line, which is close to the optimal racing line as proposed by Heilmeier et al. (2020). The race track is represented by a sequence of tuples (,,), , where (,) denotes the coordinate of the center location and denotes the lane width at the -th point. The output racing line consists of a tuple of seven variables: coordinates and , longitudinal displacement , longitudinal velocity , acceleration , heading angle , and curvature . It is obtained by minimizing the following cost:

| (4) |

where the vehicle width is , and is the lateral displacement with respect to the reference center line.

To create a velocity profile, we need to consider the vehicle’s constraints on both longitudinal and lateral acceleration Heilmeier et al. (2020). Our approach involves generating a library of velocity profiles, each tailored to specific lateral acceleration limits determined by the friction coefficients for the front () and rear () tires, as well as the vehicle’s mass () and the gravitational constant (). In particular, we produce a set of velocity profiles covering a range of maximum lateral forces corresponding to the friction within the interval . This library allows us to retrieve a velocity profile that matches a given value of . Interpolation is necessary when we encounter a friction value that falls within the valid range but is not explicitly present in the library.

An example of a racing line calculated for the racetrack used in our numerical study in Section 4 is shown in Figure 4.

Appendix D Dynamic Bicycle Model

For any car , we denote its mass by , its moment of inertia in the vertical direction about the center of mass by , the distance between the center of mass (COM) and its front wheel by , and the distance from the COM to the rear wheel . Also, denotes the inverse of radius of curvature of the track at . Using these notations, the dynamics of car is defined below:

| (5) |

where , are the velocities in frenet frame; are velocities in body frame; is the longitudinal force on the rear tire at time . Here, and are parameters that govern the longitudinal force generated on the car in response to the throttle command, while and are parameters that account for the friction and drag forces acting on the car; is the lateral force on the front tire depending on the slipping angle , which is given by . Here are the parameters of Pacejka tire model; and is the lateral force on the rear tire depending on the slipping angle , which is given by . Here are the parameters of Pacejka tire model.