MSLKANet: A Multi-Scale Large Kernel Attention Network for Scene Text Removal

Abstract

Scene text removal aims to remove the text and fill the regions with perceptually plausible background information in natural images. It has attracted increasing attention due to its various applications in privacy protection, scene text retrieval, and text editing. With the development of deep learning, the previous methods have achieved significant improvements. However, most of the existing methods seem to ignore the large perceptive fields and global information. The pioneer method can get significant improvements by only changing training data from the cropped image to the full image. In this paper, we present a single-stage multi-scale network MSLKANet for scene text removal in full images. For obtaining large perceptive fields and global information, we propose multi-scale large kernel attention (MSLKA) to obtain long-range dependencies between the text regions and the backgrounds at various granularity levels. Furthermore, we combine the large kernel decomposition mechanism and atrous spatial pyramid pooling to build a large kernel spatial pyramid pooling (LKSPP), which can perceive more valid pixels in the spatial dimension while maintaining large receptive fields and low cost of computation. Extensive experimental results indicate that the proposed method achieves state-of-the-art performance on both synthetic and real-world datasets and the effectiveness of the proposed components MSLKA and LKSPP.

1 Introduction

Scene text images contain quite a lot of sensitive and private information, such as names, addresses, and cellphone numbers. With the increasing development of scene text detection and recognition technology, there is a high risk that the information collected automatically is used for illegal purposes. Therefore, a scene text removal task is proposed to solve this problem. Scene Text Removal aims at erasing text regions and filling the regions with perceptually plausible background information in natural images. It is also useful for privacy protection, image text editing [31], video text editing [25], and scene text retrieval [29].

| Method | PSNR | MSSIM | MSE | AGE | pEPs | pCEPs |

|---|---|---|---|---|---|---|

| croped image | 20.60 | 84.11 | 0.0233 | 14.4795 | 0.1304 | 0.0868 |

| full image | 30.43 | 94.02 | 0.0018 | 5.2371 | 0.0301 | 0.0231 |

Scene text removal is a challenging task because it inherits the difficulty of both scene text detection and image inpainting tasks. With the development of deep learning, CNN-based [21, 20, 30] and GAN-Based methods [33, 17, 26] have achieved significant improvements. However, both scene text detection and image inpainting need global information, this problem seems to be ignored. As shown in table 1, we can get significant improvements by changing the cropped image to a full image, which also can demonstrate the importance of large perceptive fields and global information. Previous methods propose to predict the text stroke [17, 30] or combine the text region mask [27, 26] as input to focus scene text removal only on these stroke regions to avoid the issue. In addition, many existing algorithms make few attempts to eliminate redundant feature information resulting in usually not better structure restoration and detail preservation while their receptive fields are limited and often cause inexhaustive erasure and unveracious inpainting due to lack of global information. Therefore, it is necessary to make full use of the text region features and the background features to explore the scale-space feature correlation from a global and local perspective.

To address these issues, we propose a novel one-stage Multi-Scale Large Kernel Attention scene text removal network (MSLKANet) that combines multi-scale feature extraction[23], large kernel attention [10], large kernel Decomposition, and spatial pyramid pooling [4, 11]. Specifically, we propose a Multi-Scale Large Kernel Attention (MSLKA) block that combines multi-scale mechanism and large kernel attention to build various long-range correlations with relatively few computations. Moreover, to get larger receptive fields, we proposed a Large Kernel Spatial Pyramid Pooling(LKSPP) block, which was implemented by replacing the atrous convolution with decomposed large kernel convolution. More importantly, our proposed blocks are general in the sense that they could be easily applied to any network.

We conducted extensive experiments on two benchmark datasets: SCUT-EnsText [17] and SCUT-syn [33]. Both the qualitative and quantitative results demonstrate that MSLKANet can outperform previous state-of-the-art STR methods. The proposed MSLKA and LKSPP blocks can be used for other STR models for performance enhancement.

We summarize the contributions of this work as follows:

-

•

We propose multi-scale large kernel attention (MSLKA) to obtain long-range dependencies between the text regions and the backgrounds at various granularity levels, therefore significantly improving the model representation capability.

-

•

We integrate the large kernel decomposition mechanism and atrous spatial pyramid pooling to build a large kernel spatial pyramid pooling (LKSPP), which can perceive more valid pixels in the spatial dimension while maintaining large receptive fields and low cost of computation.

-

•

Extensive experiments on both real and synthetic datasets demonstrate the performance of MSLKANet, significantly outperforming existing SOTA methods on quantitative and qualitative results. Ablation studies demonstrate the effectiveness of the proposed MSLKA and LKSPP blocks.

2 Related works

Generally, the existing STR research can be classified into one-stage methods and two-stage methods. One-stage methods automatically detect the text regions and remove them in an end-to-end network. The method proposed in [21] is the first deep learning-based method to address the scene text removal task in an end-to-end way. It uses a single-stage U-shaped architecture with skip connections. However, their method only uses cropped image patches as input to train the network, which limits the equality of the scene text removal results due to the loss of global information. Scene Text removal can be taken as image-to-image translation. Inspired by Pix2pix [14], EnsNet [33] proposed a refined loss and a local-aware discriminator to ensure the background reconstruction and integrity of the non-text region. EraseNet proposed an additional segmentation head to help locate the text and introduce a coarse-to-refinement architecture from image inpainting. MTRNet++ [26] separately encoded the image content and text mask in two branches. Cho et al. [6] proposed a two-task blending structure that performs both text segmentation and background restoration at the same time. PERT[30] progressively performed scene text erasure with a region-based modification strategy to only modify the pixels in the predicted text regions. PSSTRNet[20] proposed a novel mask update module to get a more and more accurate text mask for region-based modification strategy and an adaptive fusion strategy to make full use of results from different iterations.

The two-step methods decompose the text-removal task into two sub-problems: text detection and background in painting. MTRNet [27] concat the scene text image and text region mask as input. Zdenek et al. [32] use the bounding boxes of text regions to train the text detection network and use the general natural scene images to train the inpainting network. This weak supervision method does not require paired training data. Conrad et al. [7] use a text detector to first get coarse text regions, and then use a segmentation network to refine the text pixels before the application of a pre-trainedEdgeConnect [22] for background inpainting. Bian et al. [2] proposed a cascaded GAN-based model, which decouples text stroke detection and stroke removal in text removal tasks.

3 Method

3.1 Framework Overview

Fig.1 shows the overall architecture of the proposed MSLKANet. It is based on the UNet-Style architecture with encoder, decoder, and skip-connections. Furthermore, we propose large kernel spatial pyramid pooling (LKSPP) and multi-scale large kernel attention (MSLKA) for obtaining long-range dependencies between the text regions and the backgrounds. In the loss functions, we use reconstruction loss, style loss [8], and perception loss [16] to keep the same with most existing methods.

3.2 multi-scale large kernel attention

Attention mechanisms can help networks focus on important information and ignore irrelevant ones. And multi-scale feature fusion methods effectively combine image features at different scales and are now widely used to collect useful information about objects and their surroundings. However, they seem to be ignored by previous methods. Compare with other attention mechanisms(Channel Attention [13], Self-Attention [28]), Large Kernel Attention [10] combines the advantages of convolution and self-attention. It takes the local contextual information, large receptive field, linear complexity, dynamic process, spatial dimension adaptability, and channel dimension adaptability into consideration.

Under the guidance of the above ideas, we propose multi-scale large kernel attention (MSLKA) to combine large kernel decomposition and multi-scale learning for obtaining heterogeneous-scale correlations between the text regions and the background regions.

Large Kernel Attention. LKA adaptively builds the long-range relationship by decomposing a convolution into a depth-wise dilation convolution with dilation , a depth-wise convolution and a 11 convolution. Through the above decomposition, it can capture a long-range relationship with slight computational cost and parameters.

Multi-Scale Large Kernel Attention. To learn the attention maps with multi-scale longe range information, we improve the LKA with the group-wise multi-scale mechanism. As shown in Fig.2, given the input feature maps , we split the input into different groups. For different groups, we use different dilation rates for various perceptive fields for multi-scale longe range information. In detail, we leverage four groups of LKA with a set of dilation rates. By setting a fix-size kernel 5 in each depth-wise -dilation convolution, we get a set of large kernels . Through the above convolutions, we get the four scale attention maps and then reweight the different groups of features. In the last, we concat the four groups of features as output.

3.3 large kernel spatial pyramid pooling

For obtaining large perceptive field and multi-scale information, Spatial Pyramid Pooling(SPP) is another frequently-used method. Atrous Spatial Pyramid Pooling(ASPP) proposed and improved in [4, 3, 5] is a most common and efficient block in different SPP methods(SPP[11], PPM[34], ASPP). In Deeplabv2[5], the ASPP block only includes several parallel atrous convolutions with different rates for getting multi-scale information and a 1x1 convolution. Image-level pooling ars insert into ASPP for obtaining global information in Deeplabv3 [4]. In Deeplabv3+[5], they use depthwise separable atrous convolution replacing the standard atrous convolution for reducing the cost of computation. Motivated by LKA and the development of ASPP, we use decomposed large kernel convolution to substitute the depthwise separable atrous convolution for the fusion of more valid pixels in the spatial dimension while maintaining large receptive fields and lower cost of computation. In detail, our large kernel spatial pyramid pooling (LKSPP) includes three parallel decomposed large kernel convolutions with different rates(3,4,5) and a fix-size kernel 7 in each depth-wise dilation convolution for getting multi-scale information, a 1x1 convolution, and an image-level pooling as shown in Fig.3, .

3.4 Loss Functions

We introduce three loss functions for training MSLKANet, including reconstruction loss, perceptual loss, and style loss. Given the input scene text image , text-removed ground truth (gt) , and the STR output from MSLKANet is denoted as .

Reconstruction Loss. First of all, we apply distance is proposed to measure the output and the ground truth in the pixel level.

| (1) |

Perceptual Loss. To capture the high-level semantic difference and simulate the human perception of image quality, we utilize the perceptual loss[16] in Eq.(2). is the activation features of the -th layer of the VGG-16 backbone.

| (2) |

Style Loss. We also use the style loss [14] for reducing the global level style of output and its corresponding ground-truths as Eq.(3), where is the Gram matrix constructed from the selected activation features in perceptual loss, and is mean-squared distance.

| (3) |

In Summary, the total loss for training MSLKANet is the weighted combination of the above mentioned losses:

| (4) |

In experiments, , is set to be 120,0.01, respectively.

4 Experiments and Results

4.1 Datasets and Evaluation Metrics

SCUT-Syn. It is a synthetic dataset that splits 8,000 images for training and 800 images for testing. More details can be found in [33].

SCUT-EnsText. It contains 2,749 training images and 813 test images which are collected in real scenes. More descriptions refer to [17].

Evaluation Metrics:. Following the previous methods [33, 17, 20] For detecting text on the output images, we employ a text detector CRAFT[1] to calculate recall and F-score. The lower, the better. PSNR, MSE, MSSIM, AGE, pEPs, and pCEPS are adopted for measuring the equality of the output images. The higher MSSIM and PSNR, and the lower AGE, pEPs, pCEPS, and MSE indicate better results.

4.2 Implementation Details

We train MSLKANet on the training set of SCUT-EnsText and SCUT-Syn and evaluate them on their corresponding testing sets. We follow [17] to apply data augmentation during the training stage. We apply a random rotation of a maximum degree of 10◦, random horizontal flip, and random vertical flip with a probability of 0.5 for data augmentation during training. The model is optimized by adamW optimizer with the weight decay of and the epison of . The initial learning rate is set to be . We also use the warmup strategy [9] that increases the learning rate from 0 to the initial learning rate linearly to overcome early optimization difficulties. After the learning rate warmup, we typically apply the cosine annealing strategy [19] to steadily decrease the value from the initial learning rate. The MSLKANet is trained by a single NVIDIA GPU 3090 with a batch size of 16 and the input image size of 256 256.

| Method | PSNR | MSSIM | MSE | AGE | pEPs | pCEPs |

| Baseline | 33.87 | 96.42 | 0.15 | 2.4561 | 0.0171 | 0.0112 |

| +MSLKA | 34.89 | 97.10 | 0.0013 | 1.8228 | 0.0142 | 0.0085 |

| +MSLKA+ASPP | 35.07 | 97.18 | 0.0013 | 1.7527 | 0.0133 | 0.082 |

| +MSLKA+LKSPP | 35.27 | 97.27 | 0.0012 | 1.6647 | 0.0129 | 0.0076 |

4.3 Ablation Study

| Method | Image-Eval | Detection-Eval(%) | |||||||

| PSNR | MSSIM | MSE | AGE | pEPs | pCEPs | P | R | F | |

| Original Images | - | - | - | - | - | - | 79.8 | 69.7 | 74.4 |

| Pix2pix | 26.75 | 88.93 | 0.0033 | 5.842 | 0.048 | 0.0172 | 71.3 | 36.5 | 48.3 |

| Scene Text Eraser | 20.60 | 84.11 | 0.0233 | 14.4795 | 0.1304 | 0.0868 | 52.3 | 14.1 | 22.2 |

| EnsNet | 29.54 | 92.74 | 0.0024 | 4.1600 | 0.2121 | 0.0544 | 68.7 | 32.8 | 44.4 |

| EraseNet | 31.62 | 95.05 | 0.0015 | 3.1264 | 0.0192 | 0.0110 | 54.1 | 8.0 | 14.0 |

| PERT | 33.25 | 96.95 | 0.0014 | 2.1833 | 0.0136 | 0.0088 | 52.7 | 2.9 | 5.4 |

| PSSTRNet | 34.65 | 96.75 | 0.0014 | 1.7161 | 0.0135 | 0.0074 | 47.7 | 5.1 | 9.3 |

| MSLKANet(Ours) | 35.27 | 97.27 | 0.0012 | 1.6647 | 0.0129 | 0.0076 | 51.3 | 3.8 | 7.0 |

| Method | PSNR | MSSIM | MSE | AGE | pEPs | pCEPs | Parameters |

| Pix2pix | 25.16 | 87.63 | 0.0038 | 6.8725 | 0.0664 | 0.0300 | 54.4M |

| Scene Text Eraser | 24.02 | 89.49 | 0.0123 | 10.0018 | 0.0728 | 0.0464 | 89.16M |

| EnsNet | 37.36 | 96.44 | 0.0021 | 1.73 | 0.0276 | 0.0080 | 12.4M |

| EraseNet | 38.31 | 97.68 | 0.0002 | 1.5982 | 0.0048 | 0.0009 | 19.74M |

| PERT | 39.40 | 97.87 | 0.0002 | 1.4149 | 0.0045 | 0.0006 | 14.00M |

| PSSTRNet | 39.25 | 98.15 | 0.0002 | 1.2035 | 0.0043 | 0.0008 | 4.88M |

| MSLKANet | 41.40 | 98.58 | 0.0001 | 0.9976 | 0.0026 | 0.0003 | 9.62M |

In this section, we verify the contributions of different components MSLKA and LKSPP on the SCUT-Text dataset. Our baseline model is implemented by a UNet-Style model similar to the pioneer method. In total, we conduct four experiments: 1. baseline, 2.baseline+MSLKA, 3.baseline+MSLKA+ASPP 4.baseline+MSLKA+LKSPP. All experiments use the same training and test settings.

MSLKA. MSLKA aims to obtain long-range dependencies between the text regions and the backgrounds at various granularity levels. As shown in table2, our MSLKA provides significant enrichments over the baseline on all metrics.

LKSPP. LKSPP is proposed to perceive more valid pixels in the spatial dimension while maintaining large receptive fields and lowering the cost of computation. It can also provide global information According to the results shown in table2, our LKSPP is helpful in both text removal and background in painting.

4.4 Comparison with State-of-the-Art Approaches

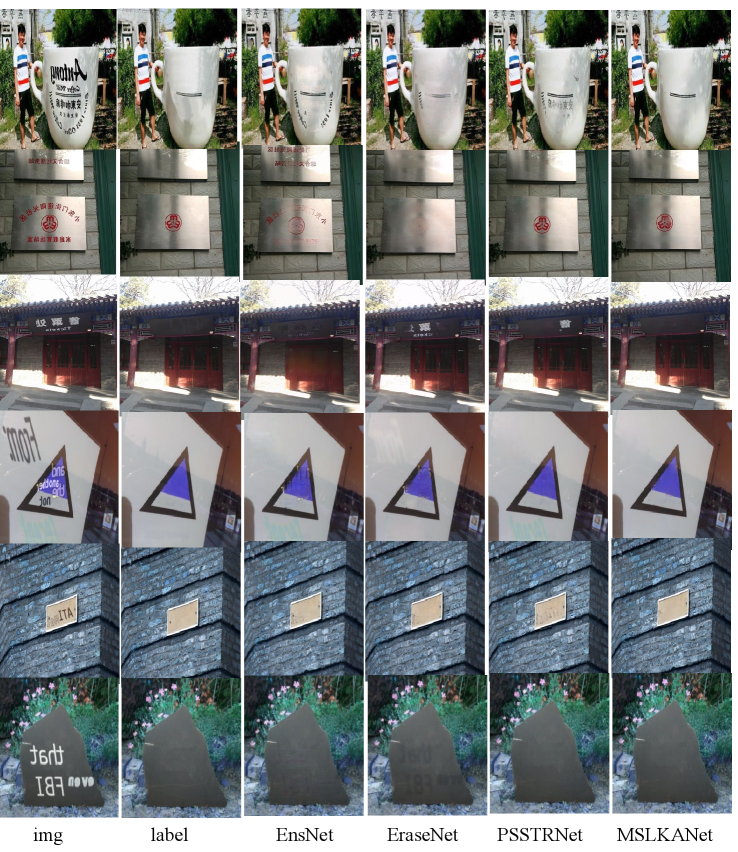

In this section, we compare our proposed MSLKANet with six state-of-the-art methods: Pix2pix[14], Scene Text Eraser[21], EnsNet[33], EraseNet[17], PERT [30] and PSSTRNet [20], on both SCUT-EnsText and SCUT-Syn datasets. Qualitative and quantitative results are illustrated in Fig.4, table3 and 4, respectively.

Qualitative Comparison. As shown in the 1st, 3rd, and 4th rows of Fig.4, our model can remove multi-scale texts, especially for the larger scale text. Compared with other state-of-the-art methods, the results of our proposed MSLKANet have significantly fewer color discrepancies and blurriness, and cleaner background. They demonstrate our model could generate more natural results on text removal and background inpainting results.

4.5 Generalization to general object removal

Following the important scene text removal network EnsNet[33], we also execute an experiment on the general object removal task to test the generalization ability of MSLKANet. We also evaluate MSLKANet on the well-known Scene Background Modeling (SBMnet) dataset [15], which is proposed for background estimation algorithms. The qualitative results are shown in Fig.5, our MSLKANet reconstructs a natural background. This means that our method can be generalized to remove pedestrians, cars, and fish without specific adjustments. It can certify the generalization of our proposed MSLKANet.

5 limitation

6 Conclusion

In this paper, we present a single-stage multi-scale network MSLKANet for scene text removal in full images. In experiments, we find that the pioneer method can get significant improvements by only changing training data from the cropped image to the full image. For obtaining large perceptive fields and global information, we propose multi-scale large kernel attention (MSLKA) to obtain long-range dependencies between the text regions and the backgrounds at various granularity levels. Furthermore, we combine the large kernel decomposition mechanism and atrous spatial pyramid pooling to build large kernel spatial pyramid pooling (LKSPP), which can fuse more valid pixels in the spatial dimension while maintaining large receptive fields and low cost of computation. Extensive experimental results indicate that the proposed method achieves state-of-the-art performance on both synthetic and real-world datasets and the effectiveness of the proposed components MSLKA and LKSPP.

References

- [1] Youngmin Baek, Bado Lee, Dongyoon Han, Sangdoo Yun, and Hwalsuk Lee. Character region awareness for text detection. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, pages 9365–9374, 2019.

- [2] Xuewei Bian, Chaoqun Wang, Weize Quan, Juntao Ye, Xiaopeng Zhang, and Dong-Ming Yan. Scene text removal via cascaded text stroke detection and erasing. Computational Visual Media, 8(2):273–287, 2022.

- [3] Liang-Chieh Chen, George Papandreou, Iasonas Kokkinos, Kevin Murphy, and Alan L Yuille. Deeplab: Semantic image segmentation with deep convolutional nets, atrous convolution, and fully connected crfs. IEEE transactions on pattern analysis and machine intelligence, 40(4):834–848, 2017.

- [4] Liang-Chieh Chen, George Papandreou, Florian Schroff, and Hartwig Adam. Rethinking atrous convolution for semantic image segmentation. arXiv preprint arXiv:1706.05587, 2017.

- [5] Liang-Chieh Chen, Yukun Zhu, George Papandreou, Florian Schroff, and Hartwig Adam. Encoder-decoder with atrous separable convolution for semantic image segmentation. In Proceedings of the European conference on computer vision (ECCV), pages 801–818, 2018.

- [6] Junho Cho, Sangdoo Yun, Dongyoon Han, Byeongho Heo, and Jin Young Choi. Detecting and removing text in the wild. IEEE Access, 9:123313–123323, 2021.

- [7] Benjamin Conrad and Pei-I Chen. Two-stage seamless text erasing on real-world scene images. In 2021 IEEE International Conference on Image Processing (ICIP), pages 1309–1313. IEEE, 2021.

- [8] Leon A Gatys, Alexander S Ecker, and Matthias Bethge. Image style transfer using convolutional neural networks. In Proceedings of the IEEE conference on computer vision and pattern recognition, pages 2414–2423, 2016.

- [9] Priya Goyal, Piotr Dollár, Ross Girshick, Pieter Noordhuis, Lukasz Wesolowski, Aapo Kyrola, Andrew Tulloch, Yangqing Jia, and Kaiming He. Accurate, large minibatch sgd: Training imagenet in 1 hour. arXiv preprint arXiv:1706.02677, 2017.

- [10] Meng-Hao Guo, Cheng-Ze Lu, Zheng-Ning Liu, Ming-Ming Cheng, and Shi-Min Hu. Visual attention network. arXiv preprint arXiv:2202.09741, 2022.

- [11] Kaiming He, Xiangyu Zhang, Shaoqing Ren, and Jian Sun. Spatial pyramid pooling in deep convolutional networks for visual recognition. IEEE transactions on pattern analysis and machine intelligence, 37(9):1904–1916, 2015.

- [12] Kaiming He, Xiangyu Zhang, Shaoqing Ren, and Jian Sun. Deep residual learning for image recognition. In Proceedings of the IEEE conference on computer vision and pattern recognition, pages 770–778, 2016.

- [13] Jie Hu, Li Shen, and Gang Sun. Squeeze-and-excitation networks. In Proceedings of the IEEE conference on computer vision and pattern recognition, pages 7132–7141, 2018.

- [14] Phillip Isola, Jun-Yan Zhu, Tinghui Zhou, and Alexei A Efros. Image-to-image translation with conditional adversarial networks. In Proceedings of the IEEE conference on computer vision and pattern recognition, pages 1125–1134, 2017.

- [15] Pierre-Marc Jodoin, Lucia Maddalena, Alfredo Petrosino, and Yi Wang. Extensive benchmark and survey of modeling methods for scene background initialization. IEEE Transactions on Image Processing, 26(11):5244–5256, 2017.

- [16] Justin Johnson, Alexandre Alahi, and Li Fei-Fei. Perceptual losses for real-time style transfer and super-resolution. In European conference on computer vision, pages 694–711. Springer, 2016.

- [17] Chongyu Liu, Yuliang Liu, Lianwen Jin, Shuaitao Zhang, Canjie Luo, and Yongpan Wang. Erasenet: End-to-end text removal in the wild. IEEE Transactions on Image Processing, 29:8760–8775, 2020.

- [18] Zhuang Liu, Hanzi Mao, Chao-Yuan Wu, Christoph Feichtenhofer, Trevor Darrell, and Saining Xie. A convnet for the 2020s. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, pages 11976–11986, 2022.

- [19] Ilya Loshchilov and Frank Hutter. Sgdr: Stochastic gradient descent with warm restarts. arXiv preprint arXiv:1608.03983, 2016.

- [20] Guangtao Lyu and Anna Zhu. Psstrnet: Progressive segmentation-guided scene text removal network. In 2022 IEEE International Conference on Multimedia and Expo (ICME), pages 1–6. IEEE, 2022.

- [21] Toshiki Nakamura, Anna Zhu, Keiji Yanai, and Seiichi Uchida. Scene text eraser. In 2017 14th IAPR International Conference on Document Analysis and Recognition (ICDAR), volume 1, pages 832–837. IEEE, 2017.

- [22] Kamyar Nazeri, Eric Ng, Tony Joseph, Faisal Qureshi, and Mehran Ebrahimi. Edgeconnect: Structure guided image inpainting using edge prediction. In Proceedings of the IEEE/CVF International Conference on Computer Vision Workshops, pages 0–0, 2019.

- [23] Jia Qin, Huihui Bai, and Yao Zhao. Multi-scale attention network for image inpainting. Computer Vision and Image Understanding, 204:103155, 2021.

- [24] Olaf Ronneberger, Philipp Fischer, and Thomas Brox. U-net: Convolutional networks for biomedical image segmentation. In International Conference on Medical image computing and computer-assisted intervention, pages 234–241. Springer, 2015.

- [25] Jeyasri Subramanian, Varnith Chordia, Eugene Bart, Shaobo Fang, Kelly Guan, Raja Bala, et al. Strive: Scene text replacement in videos. In Proceedings of the IEEE/CVF International Conference on Computer Vision, pages 14549–14558, 2021.

- [26] Osman Tursun, Simon Denman, Rui Zeng, Sabesan Sivapalan, Sridha Sridharan, and Clinton Fookes. Mtrnet++: One-stage mask-based scene text eraser. Computer Vision and Image Understanding, 201:103066, 2020.

- [27] Osman Tursun, Rui Zeng, Simon Denman, Sabesan Sivapalan, Sridha Sridharan, and Clinton Fookes. Mtrnet: A generic scene text eraser. In 2019 International Conference on Document Analysis and Recognition (ICDAR), pages 39–44. IEEE, 2019.

- [28] Ashish Vaswani, Noam Shazeer, Niki Parmar, Jakob Uszkoreit, Llion Jones, Aidan N Gomez, Łukasz Kaiser, and Illia Polosukhin. Attention is all you need. Advances in neural information processing systems, 30, 2017.

- [29] Hao Wang, Xiang Bai, Mingkun Yang, Shenggao Zhu, Jing Wang, and Wenyu Liu. Scene text retrieval via joint text detection and similarity learning. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, pages 4558–4567, 2021.

- [30] Yuxin Wang, Hongtao Xie, Shancheng Fang, Yadong Qu, and Yongdong Zhang. Pert: A progressively region-based network for scene text removal. arXiv preprint arXiv:2106.13029, 2021.

- [31] Liang Wu, Chengquan Zhang, Jiaming Liu, Junyu Han, Jingtuo Liu, Errui Ding, and Xiang Bai. Editing text in the wild. In Proceedings of the 27th ACM international conference on multimedia, pages 1500–1508, 2019.

- [32] Jan Zdenek and Hideki Nakayama. Erasing scene text with weak supervision. In Proceedings of the IEEE/CVF Winter Conference on Applications of Computer Vision, pages 2238–2246, 2020.

- [33] Shuaitao Zhang, Yuliang Liu, Lianwen Jin, Yaoxiong Huang, and Songxuan Lai. Ensnet: Ensconce text in the wild. In Proceedings of the AAAI Conference on Artificial Intelligence, volume 33, pages 801–808, 2019.

- [34] Hengshuang Zhao, Jianping Shi, Xiaojuan Qi, Xiaogang Wang, and Jiaya Jia. Pyramid scene parsing network. In Proceedings of the IEEE conference on computer vision and pattern recognition, pages 2881–2890, 2017.